I still had some nagging thoughts after my recent examination of the distribution of Elo. In that blog post, I recognized that a higher probability of a draw would lead to tighter standard error around the true 'ability' of a player, as estimated by an Elo ranking. Without any data, I punted on what that probability should be. So I decided to look at some real data.

I started working in a risk role about a year ago. Compared to my previous gig, there is a much greater focus on discrete event modeling than on continuous outcomes. Logistic regression and survival analysis are the tools of the trade. However, financial risk modeling is more complex than the textbook presentation of these methods. As is chess. A loan holder might go bankrupt, stop paying, die, etc. Similarly, a chess player might win, lose or draw.

There are two main ways of approaching multiple outcome discrete models that leverage the simpler binary models: the competing hazards view, and the sequential hazards view. Briefly, risk under competing hazards would be like traversing the Fire Swamp: at any time, the spurting flames, the lightning sand or the rodents of unusual size might harm you. The risks all come at you at once. An example of a sequential hazard is undergoing surgery: you might die in surgery, and if you survive you might incur an infection and die of complications; the risks present themselves conditional on surviving other risks. (Both of these views are mostly just conveniences, and real risks are never so neatly defined.)

Returning to chess, I will consider sequential hazards. Assume two players, and let the difference in true abilities between them be denoted \(\Delta a\). As with Elo, we want the difference in abilities is such that the odds that the first player wins a match between them is \(10^{\Delta a / 400}\). But we have to consider ties. So instead, let \(E_A\) be the expected value of the game from the viewpoint of player \(A\). That is 1 times the probability of an outright for \(A\) win plus one-half the probability of a draw. Now we want

Now suppose that \(p_1\) is the probability of an outright victory for \(A\), and \(p_2\) is the probability of a draw, conditional on \(A\) not achieving victory. These two are related to \(E_A\) by

Moreover, the variance of the outcome is also a function of \(p_1, p_2\). We need the variance to talk about the noise in the Elo rating.

If one had the outcomes from a bunch of games where the true abilities of the players were known, one would then proceed as follows:

- Use logistic regression to estimate \(\log \frac{p_1}{1-p_1}\) as a function of the abilities of the players. The 'True' events are those where the first player wins, while 'False' are losses and draws.

- Use logistic regression to estimate \(\log \frac{p_2}{1-p_2}\) as a function of the abilities of the players. The 'True' events are those where the game was a draw, while the 'False' events are those where the first player loses. This second logistic regression has a smaller sample size than the first, and they have correlated errors.

Note however, that we are to keep the calibration of \(E_A\) in terms of \(\Delta a\) we don't actually have to perform the second logistic regression. That is, if \(E_A\) and \(p_1\) are known, we can compute \(p_2\).

Data Please

Enough of the talky bits, let's look at some data. I put together some scripts for downloading, extracting and processing some pgn data from the top-5000 site. The upstream data has the outcomes of nearly 2 million games played. I restrict my attention to games where the Elo of both players was measured at the time the match was played, which brings my sample down to 1179200 games played. Here I load the data, then perform some transforms to compute the mean 'level' of the game (Grandmaster, FIDE master, Expert, or other) based on average Elo, and then detect whether the win and loss conditions.

suppressMessages({

library(readr)

library(dplyr)

library(tidyr)

library(forcats)

})

indat <- readr::read_csv('../data/millionbase-2.22_summa.csv.gz',

col_types=cols(Year = col_integer(),

deltaElo = col_integer(),

meanElo = col_integer(),

result = col_double()))

sdat <- indat %>%

filter(!is.na(deltaElo),!is.na(meanElo),!is.na(result)) %>%

filter(deltaElo <= 400) %>%

mutate(skills=ifelse(meanElo > 2500,"GM", # the more tidy way is via case_when

ifelse(meanElo > 2300,"FM",

ifelse(meanElo > 2000,"Expert","other")))) %>%

mutate(skills=forcats::fct_reorder(factor(skills),meanElo,.desc=TRUE)) %>%

mutate(is_tie=(result==0.5),

is_win=(result==1.0))

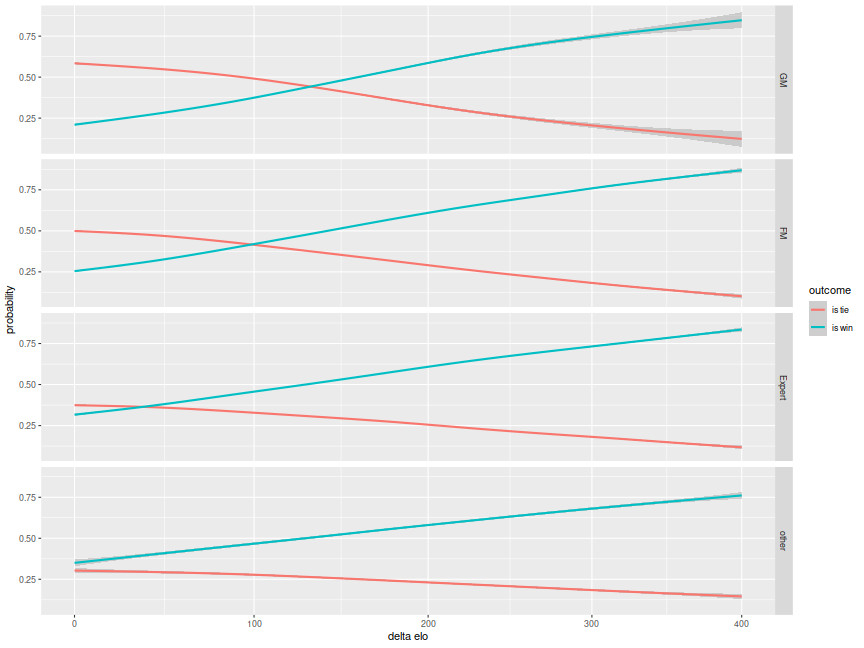

Now I plot the data. I use a non-parametric fit to give a smooth estimate of the probability of a win, and the probability of a tie, from the point of view of the player with the higher Elo rating, as a function of Elo. I plot facets for the four different 'levels' of play. Note that the probability of a tie is unconditional on whether a win was had for the first player.

library(ggplot2)

library(scales)

# scales: elo logit

elogit <- scales::trans_new('inverse elo logit',function(x) { 1 / (1 + exp(-x/400)) },function(y) { 400*log(y/(1-y)) })

# plot it

ph <- sdat %>%

tidyr::gather(key='iswhat',value='yesno',is_tie,is_win) %>%

mutate(iswhat=gsub('_',' ',iswhat),yesno=as.numeric(yesno)) %>%

ggplot(aes(x=deltaElo,y=yesno,color=iswhat)) +

stat_smooth() +

facet_grid(skills ~ .) +

scale_x_continuous(trans=elogit) +

labs(x='delta elo',y='probability',color='outcome')

print(ph)

The pattern you see is an increased chance of a draw at higher levels of play. For two equally matched Grandmasters, the probability of a tie is even greater than one half. This should match one's experience observing championship tournaments, where it feels like all the matches end in a draw. It is not clear which way the causality goes with this: it could be that players more likely to draw will experience fewer hits to their Elo and so make it to the Grandmaster level; the less cynical interpretation is that better players are better able to avoid a loss. Maybe those aren't really different explanations for the effect.

Whatever the explanation, this does introduce a complication into the rating systems: previously one only needed the difference in abilities to estimate \(E_A\). Thus a rating could only be defined up to some arbitrary additive constant (kind of like the operation of taking an indefinite integral). The additive constant problem was 'solved' by starting all players at 1500 and letting them basically fight each other for Elo points. This seems insensitive to changes in the overall pool (causing inflation or deflation, perhaps), and does not seem like a good way to compare players across eras.

However, the plot above seems to suggest there is a measurable effect that depends not on the difference in abilities, but in the average ability. Using this marker would in effect 'anchor' a rating system, a feature that Elo lacks. However, it presents a degree of freedom that we would have to fill somehow. That is, just as Elo was defined somewhat arbitrarily to scale decades every 400 points, we can define our anchor point based on a certain probability of a tie. From eyeballing the plot it would be something like the following: "a one half probability of an outright win for either player occurs when two players both of rating 2400 play."

I shudder to think of the perverse incentives that might arise from players being rated under such a system, as it might encourage more junior players to settle for a draw if it would lift both their ratings. This is a topic for another time. First let us see if these ideas jibe with the data.

Next Logistic

Here I perform the first logistic regression, and print its summary.

mod1 <- glm(is_win ~ deltaElo + meanElo,data=sdat,family=binomial())

print(summary(mod1))

##

## Call:

## glm(formula = is_win ~ deltaElo + meanElo, family = binomial(),

## data = sdat)

##

## Deviance Residuals:

## Min 1Q Median 3Q Max

## -2.09 -1.03 -0.79 1.13 1.71

##

## Coefficients:

## Estimate Std. Error z value Pr(>|z|)

## (Intercept) -0.019787 0.026946 -0.73 0.46

## deltaElo 0.006867 0.000024 286.22 <2e-16 ***

## meanElo -0.000421 0.000011 -38.17 <2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## (Dispersion parameter for binomial family taken to be 1)

##

## Null deviance: 1574897 on 1137821 degrees of freedom

## Residual deviance: 1465087 on 1137819 degrees of freedom

## AIC: 1465093

##

## Number of Fisher Scoring iterations: 4

# sequential hazard:

# mod2 <- glm(is_tie ~ deltaElo + meanElo,data=sdat %>% filter(!is_win),family=binomial())

is_2400 <- (log(1/3) - coef(mod1)['(Intercept)']) / (coef(mod1)['meanElo'])

We see that both the difference in ability, and the outright mean ability are significant in this logistic regression. The sign on the mean ability coefficient is negative: better players are less likely to secure an outright win, all things equal, but the effect is small. We can compute the 'anchor' point by finding the rating at which the probability of a win by the first player is exactly one quarter: this corresponds to one to three odds, and we estimate that anchor point as an Elo of 2560.077, not terribly far from our rough estimate of 2400.

This suggests the following definitions:

where \(\mu_a\) is the average ability. Then define \(p_2\) as

and, as always,

The variance of the outcome, from the first player's perspective, is then

This number can then feed into an estimate of standard error around Elo ratings, which will have to wait for a future blog post.