Atomic Openings

I've started playing a variant of chess called Atomic. The pieces move as in traditional chess and start in the same positions. In this variant, however, when one piece captures another, both are removed from the board, along with any non-pawn pieces on the (up to eight) adjacent squares. As a consequence of this one change, the game can end when your King is 'blown up' by your opponent's capture. As another consequence, Kings cannot capture, and the two Kings may occupy adjacent squares.

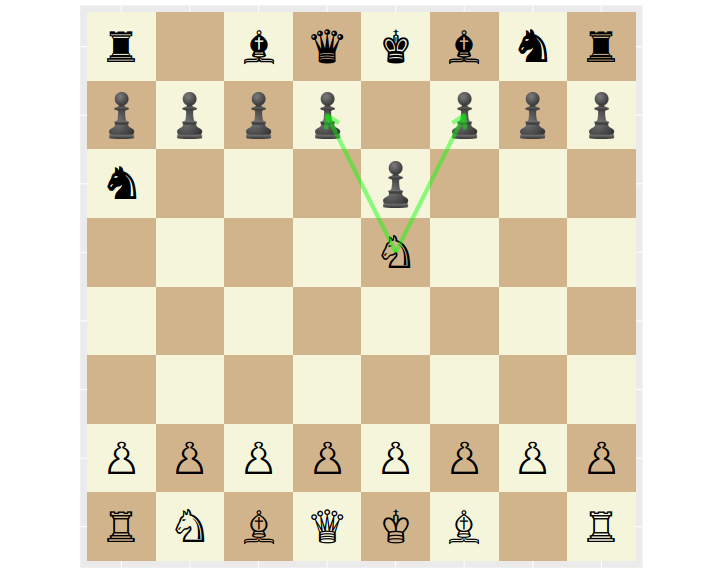

For example, in the position above, White's Knight can capture the pawn on either d7 or f7. The resulting explosion blows up the adjacent Black King and ends the game.
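To make the capture rule concrete, here is a minimal sketch of how an Atomic capture could be resolved on a board stored as a Python dict. The piece encoding (`"bK"` for the Black King, a second letter `"P"` for pawns) and the helper names are my own illustrative choices. Move legality is not checked and en passant is ignored. Because the image is not reproduced as data, the usage example puts White's Knight on e5, a hypothetical square from which both d7 and f7 are reachable.

```python
# Minimal sketch of Atomic capture resolution (illustrative, not a full engine).
# Board: dict mapping squares such as "e8" to piece codes such as "bK"
# (colour letter w/b followed by piece letter; "P" marks a pawn).

FILES = "abcdefgh"


def neighbours(square):
    """Return the (up to eight) squares adjacent to `square`."""
    f, r = FILES.index(square[0]), int(square[1])
    result = []
    for df in (-1, 0, 1):
        for dr in (-1, 0, 1):
            if df == 0 and dr == 0:
                continue
            nf, nr = f + df, r + dr
            if 0 <= nf < 8 and 1 <= nr <= 8:
                result.append(FILES[nf] + str(nr))
    return result


def atomic_capture(board, from_sq, to_sq):
    """Return a new board after the piece on from_sq captures on to_sq.

    Both pieces are removed, plus every non-pawn piece adjacent to to_sq.
    Move legality and en passant are deliberately not handled.
    """
    if to_sq not in board:
        raise ValueError("no piece to capture on " + to_sq)
    new = dict(board)
    del new[from_sq]
    del new[to_sq]
    for sq in neighbours(to_sq):
        piece = new.get(sq)
        if piece is not None and piece[1] != "P":
            del new[sq]  # the explosion spares pawns only
    return new


def king_exploded(board, colour):
    """True if the King of `colour` ("w" or "b") is no longer on the board."""
    return colour + "K" not in board.values()


if __name__ == "__main__":
    # Hypothetical fragment of the position: Black's back rank plus a White Knight on e5.
    board = {"d8": "bQ", "e8": "bK", "f8": "bB",
             "d7": "bP", "e7": "bP", "f7": "bP", "e5": "wN"}
    after = atomic_capture(board, "e5", "f7")  # Nxf7
    print(sorted(after.items()), king_exploded(after, "b"))
```

After Nxf7 only the Queen on d8 and the pawns on d7 and e7 remain: the Knight, the f7 pawn, the Bishop on f8 and the King on e8 are all removed, while the e7 pawn survives because explosions spare pawns.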

I looked around for resources on Atomic chess, but I have never had much luck with traditional chess studies, so instead I decided to learn about Atomic statistically.

As it happens, Lichess (which is truly a great site) publishes its game data, which includes over 9 million Atomic games. I wrote some code that downloads and parses this data, turning it into a CSV file. You can download v1 of this file, but Lichess is the ultimate copyright holder.
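For anyone wanting to reproduce something similar, here is a minimal sketch of the parsing step; it is not the code that produced the CSV. It assumes the Lichess export has already been downloaded and decompressed to plain PGN text, reads only the header tags (the moves are skipped), and writes an illustrative set of columns. The tag names (`Result`, `WhiteElo`, `BlackElo`, `Termination`) are ones that appear in Lichess PGN headers; the column selection and function names are my own.

```python
import csv
import re
import sys

# Matches PGN tag pairs such as: [WhiteElo "1500"]
TAG_RE = re.compile(r'^\[(\w+) "(.*)"\]$')

# Illustrative column choice; the real CSV may keep different fields.
FIELDS = ["White", "Black", "Result", "WhiteElo", "BlackElo", "Termination"]


def iter_headers(lines):
    """Yield one dict of PGN header tags per game from an iterable of lines."""
    tags, seen_moves = {}, False
    for raw in lines:
        line = raw.strip()
        match = TAG_RE.match(line)
        if match:
            if seen_moves:  # a tag after movetext starts the next game
                yield tags
                tags, seen_moves = {}, False
            tags[match.group(1)] = match.group(2)
        elif line:
            seen_moves = True  # movetext line; its contents are ignored here
    if tags:
        yield tags


def pgn_to_csv(lines, out):
    """Write the selected header fields of every game as CSV rows to `out`."""
    writer = csv.DictWriter(out, fieldnames=FIELDS, extrasaction="ignore")
    writer.writeheader()
    for tags in iter_headers(lines):
        writer.writerow(tags)


if __name__ == "__main__":
    # Hypothetical usage: decompressed PGN on stdin, CSV on stdout.
    pgn_to_csv(sys.stdin, sys.stdout)
```

Streaming line by line keeps memory use flat, which matters for a dump with millions of games; tags missing from a game are written as empty cells.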

First steps

The games in the dataset end in one of three ways: Normal (checkmate, or what passes for it in Atomic), Time forfeit, and Abandoned (game terminated before it began). The last category is very rare, so I omit it from my processing. The majority of games end the Normal way, as tabulated here:

| Termination | Games |
|---|---|
| Normal | 8426052 |
| Time forfeit | 1257295 |
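A tally like this one takes only a few lines once the data is in CSV form. This sketch assumes the CSV has a `Termination` column holding the PGN termination strings; the function names are illustrative.

```python
import csv
from collections import Counter


def termination_counts(csv_lines, drop=("Abandoned",)):
    """Count games per Termination value, skipping the categories in `drop`.

    `csv_lines` is any iterable of CSV text lines with a header row,
    e.g. an open file.
    """
    counts = Counter()
    for row in csv.DictReader(csv_lines):
        if row["Termination"] not in drop:
            counts[row["Termination"]] += 1
    return counts


def shares(counts):
    """Convert raw counts into fractions of the total."""
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()}


if __name__ == "__main__":
    # Counts taken from the table above.
    table = Counter({"Normal": 8426052, "Time forfeit": 1257295})
    for name, frac in shares(table).items():
        print(f"{name}: {frac:.1%}")
```

With the table's counts, Normal endings make up about 87.0% of the non-abandoned games and Time forfeits about 13.0%.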

The game data includes each player's Elo rating, as computed before the game. As a first check, I wanted to see whether Elo is properly calibrated. To do this, I compute the empirical win rate of White over Black, grouped by bins of the difference in their Elo …
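The calibration check can be sketched as follows. Under Elo, a player rated d points above the opponent has an expected score of 1/(1 + 10^(-d/400)), so if the ratings are well calibrated, White's empirical score in each bin of rating difference should track that curve. The 50-point bin width, counting draws as half a point (the usual Elo convention), and the function names are my assumptions; the analysis here may bin and score games differently.

```python
from collections import defaultdict

# White's score for each PGN result; counting draws as 0.5 is an assumption.
RESULT_SCORE = {"1-0": 1.0, "1/2-1/2": 0.5, "0-1": 0.0}


def expected_score(elo_diff):
    """Elo expected score for a player rated `elo_diff` points above the opponent."""
    return 1.0 / (1.0 + 10 ** (-elo_diff / 400))


def calibration(games, bin_width=50):
    """Compare White's empirical score with the Elo prediction per rating-gap bin.

    `games` yields (white_elo, black_elo, result) with integer ratings.
    Each gap is assigned to the nearest multiple of `bin_width` (halves round up).
    Returns {bin_centre: (n_games, empirical_score, expected_score)}.
    """
    totals = defaultdict(lambda: [0, 0.0])  # centre -> [games, points]
    for white_elo, black_elo, result in games:
        if result not in RESULT_SCORE:
            continue  # skip unfinished games, e.g. result "*"
        diff = white_elo - black_elo
        centre = (diff + bin_width // 2) // bin_width * bin_width
        totals[centre][0] += 1
        totals[centre][1] += RESULT_SCORE[result]
    return {
        centre: (n, points / n, expected_score(centre))
        for centre, (n, points) in sorted(totals.items())
    }


if __name__ == "__main__":
    # Made-up games, only to show the output format.
    sample = [(1500, 1500, "1-0"), (1510, 1500, "0-1"),
              (1600, 1500, "1-0"), (1590, 1500, "1/2-1/2")]
    for centre, (n, emp, pred) in calibration(sample).items():
        print(f"{centre:+5d}  n={n}  empirical={emp:.2f}  expected={pred:.2f}")
```

Evaluating the prediction at each bin centre is a simplification; with narrow bins the difference from averaging the curve over the bin is small.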
